Missing nodes

One workflow works on your machine, then breaks everywhere else.

Package dependencies

Until now.

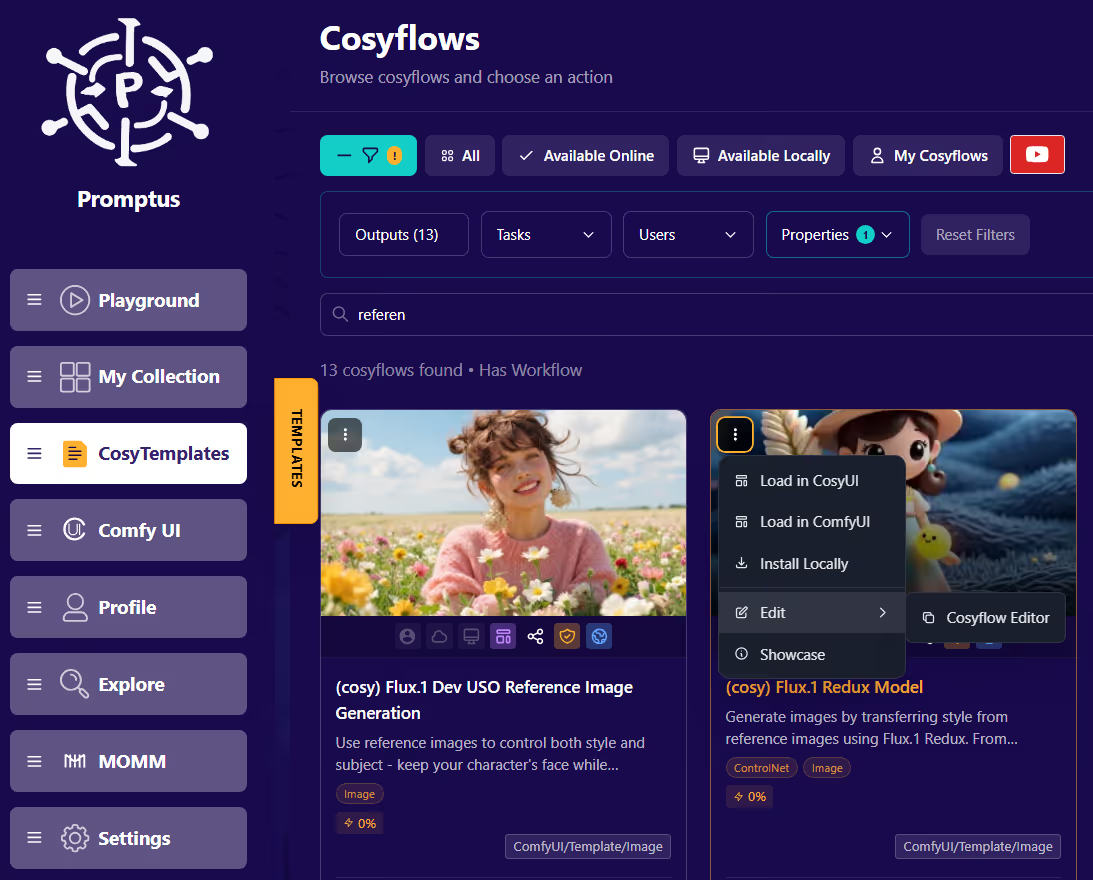

Promptus helps AI creators turn fragile workflows into reusable tools — without giving up local control.

Package dependencies

Your best workflows end up buried in folders and hard to reuse.

Make runnable tools

Stay local when you want. Use cloud GPUs only when needed.

Local first, cloud optional

Bring the workflow. Package the moving parts. Run it where it makes sense.

Master Promptus to generate locally and privately, and unlock zero-limit creation.

Promptus includes its own GPU server inside the desktop app. You don't need to manually install CUDA, Python, or other technical dependencies.

Generate images, videos, music, 3D, audio and more privately on your hardware without using cloud servers.

Download the models you want to use locally. You can select from CosyFlow workflows and install your own models from Hugging Face.

Local models only appear when you're in Local Mode. You can create privately and disable Safety Mode.

A1111 is still a solid tool for prompt-based image generation, but newer model architectures like FLUX and SD 3.5 run better elsewhere. If you want to keep using A1111 while also running ComfyUI workflows that are packaged, shareable, and local-first, that's exactly what Promptus is built for.

A1111 ties your workflow to your local Python environment, extension versions, and model paths. Move it to another machine and something almost always breaks — a missing extension, a different CUDA version, a wrong folder structure. Promptus solves this with CosyFlows: packaged workflows that include their inputs, defaults, and dependencies so they run the same way every time, on any supported machine.

A1111 is prompt-first: you type, adjust sliders, and generate. ComfyUI is node-based: you wire together a full pipeline with granular control over every step. ComfyUI is more powerful but harder to share and run reliably. Promptus imports ComfyUI workflows and wraps them in a clean interface, so you get the power of ComfyUI without managing the node graph yourself.

You can download Promptus and run it entirely on your hardware in Local Mode. Once you install the built-in ComfyUI GPU server and download a model, you can generate images, video, audio, and 3D locally with no cloud costs, no usage caps, and no data leaving your machine.

In A1111, sharing a workflow usually means handing someone a folder and hoping they have the same extensions installed. In Promptus, you package your workflow as a CosyFlow — a self-contained bundle with inputs, defaults, and model references baked in. Anyone with Promptus can run it without setup, debugging, or digging through folder paths.